Intro

Much has been said about RT systems and their superiority over typical systems in specific fields. But should we trust their name? Real-Time? As usual, the answer is no. An RT system only tries to be what its name says, that is, to react to triggers such as interrupts and respond immediately. In practice, "immediately" usually means no later than a strictly defined time limit; the delay between the trigger and the response is called latency.

Strategy

So how can we measure the ‘quality’ of our RT system? Let’s say we want a task to be woken up after 200 us. To do this, we can use a timer that generates an interrupt when the time runs out and wakes up the task. Before we put the task to sleep, let’s read the current time and store it in a variable.

- The starting point for measuring kernel latency is the moment the interrupt occurs.

- The first thing the kernel must do is notice it. With so much going on in the system, even that part can take some time.

- After that, the relevant ISR is called to handle the interrupt (in our case, to wake up the task).

- Next comes the kernel scheduler, whose job is to manage all the processes running in the system. Once our task has been woken up, it is placed on the CPU run queue, so it is now the scheduler’s job to run the task as soon as possible.

- When its time comes, the CPU starts executing the task and we can stop measuring the latency.

After that, we can read the current time again, subtract from it the time stored before we put the task to sleep, and obtain the latency by subtracting the interval given to the timer from that difference.
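
The measurement described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not how cyclictest is implemented: the function name `measure_wakeup_latencies` and its parameters are made up for this example, and a relative `time.sleep()` stands in for the timer. Numbers obtained this way also include interpreter overhead, so treat them as a rough upper bound.

```python
import time


def measure_wakeup_latencies(interval_us=200, loops=1000):
    """Return one wake-up latency per loop, in microseconds.

    Hypothetical helper: sleeps for `interval_us` and reports how much
    later than requested the task was actually running again.
    """
    interval_ns = interval_us * 1000
    latencies_us = []
    for _ in range(loops):
        t_before = time.monotonic_ns()   # remember the time before going to sleep
        time.sleep(interval_ns / 1e9)    # timer expires -> interrupt -> task is woken up
        t_after = time.monotonic_ns()    # the task is on the CPU again
        elapsed_ns = t_after - t_before
        # latency = (time after - time before) - interval given to the timer
        latencies_us.append((elapsed_ns - interval_ns) / 1000)
    return latencies_us


if __name__ == "__main__":
    samples = measure_wakeup_latencies()
    print(f"min {min(samples):.1f} us, "
          f"avg {sum(samples) / len(samples):.1f} us, "
          f"max {max(samples):.1f} us")
```

For a real-time assessment the maximum is the interesting number, because a single late wake-up is enough to miss a deadline.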

The example above is exactly the methodology of cyclictest, the test program for measuring system latencies that we will use here.

Additional load

To simulate a stressful environment for the system under test, tools like hackbench and stressapptest can be used.

- hackbench: a tool for stressing the kernel scheduler by creating pairs of threads that communicate with each other via sockets

- stressapptest: a program for generating a realistic memory, CPU, and I/O load by creating a specified number of threads that write to memory, write to a file, or communicate with a server at a given IP address

Testing platform

Tests will be performed on an i.MX8 platform with two Yocto Linux builds: a regular version and one specially configured as RT using the RT patch.

Test cases

To get a complete characterization of the system, at least a couple of tests with different loads must be performed. To get fully reliable results, each test should run for at least a couple of hours. In our case, for demonstration purposes, each test will take around 1 hour.

Test sheet:

- CASE 1: cyclictest with no load

- CASE 2: cyclictest with hackbench sending 128 B data packets

- CASE 3: cyclictest with stressapptest on 2 memory threads testing 256 MB of memory

- CASE 4: cyclictest with stressapptest on 4 memory threads testing 256 MB of memory

- CASE 5: cyclictest with stressapptest on 8 memory threads testing 256 MB of memory, 4 I/O threads and 4 network threads
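
To make the test sheet more concrete, here is a sketch of command lines that would match the five cases. The exact invocations used for this post are not given, so everything below is an assumption: the cyclictest priority, the temporary file paths, the loopback address, and the helper names are all picked for illustration. The script only builds and prints the commands; it does not run them.

```python
import shlex

DURATION_S = 3600  # "around 1 hour" per test

# Assumed cyclictest call: lock memory (-m), real-time priority 99 (-p),
# 200 us interval (-i), fixed run time in seconds (-D), summary only (-q).
CYCLICTEST_CMD = ["cyclictest", "-m", "-p", "99", "-i", "200",
                  "-D", str(DURATION_S), "-q"]


def stressapptest_cmd(mem_threads, mem_mb=256, file_threads=0, net_threads=0):
    """Build a stressapptest command line (hypothetical helper)."""
    cmd = ["stressapptest", "-s", str(DURATION_S),
           "-M", str(mem_mb), "-m", str(mem_threads)]
    for i in range(file_threads):
        cmd += ["-f", f"/tmp/sat_file{i}"]  # each -f adds a disk thread; path is made up
    for _ in range(net_threads):
        cmd += ["-n", "127.0.0.1"]          # each -n adds a network thread; address is made up
    if net_threads:
        cmd.append("--listen")              # answer the network threads locally
    return cmd


LOAD_CMD_BY_CASE = {
    1: None,                                # no load
    # 128-byte messages; with default settings hackbench finishes quickly,
    # so it would have to be repeated to cover the whole test.
    2: ["hackbench", "-s", "128"],
    3: stressapptest_cmd(mem_threads=2),
    4: stressapptest_cmd(mem_threads=4),
    5: stressapptest_cmd(mem_threads=8, file_threads=4, net_threads=4),
}

if __name__ == "__main__":
    print("measurement:", shlex.join(CYCLICTEST_CMD))
    for case, load in LOAD_CMD_BY_CASE.items():
        print(f"CASE {case} load:", shlex.join(load) if load else "(none)")
```

The load is meant to run in the background for the whole duration of the cyclictest measurement.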

Results

-

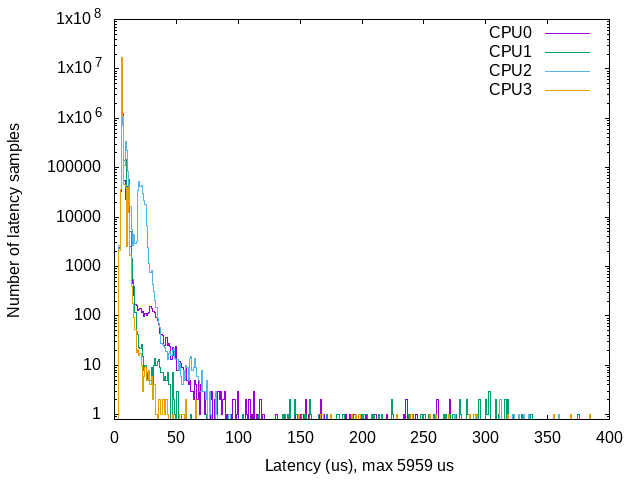

CASE 1: (No load) Regular Build

-

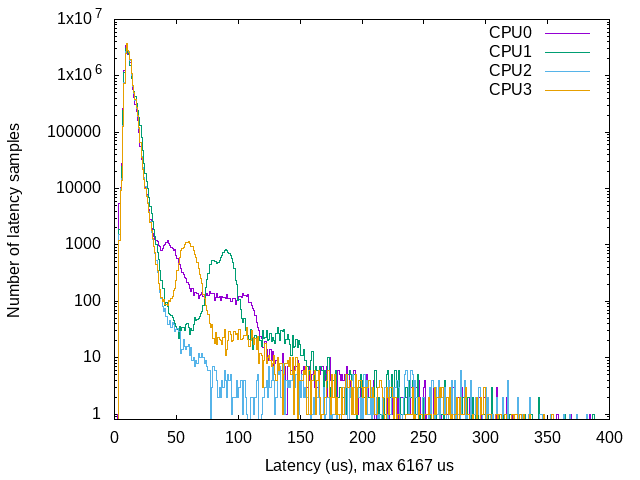

CASE 2: (with hackbench) Regular Build

-

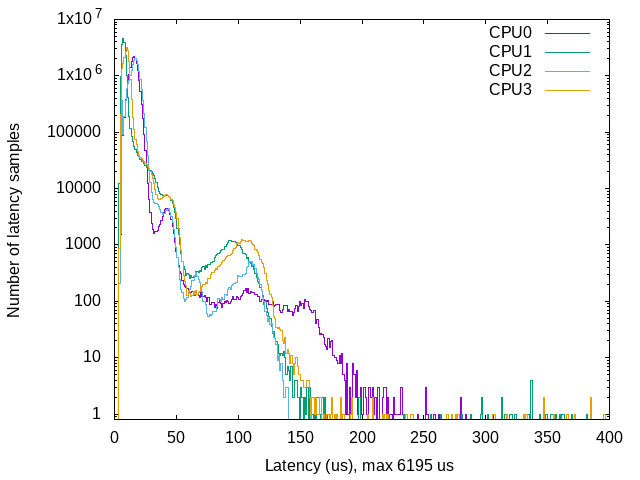

CASE 3: (light stresstest) Regular Build

-

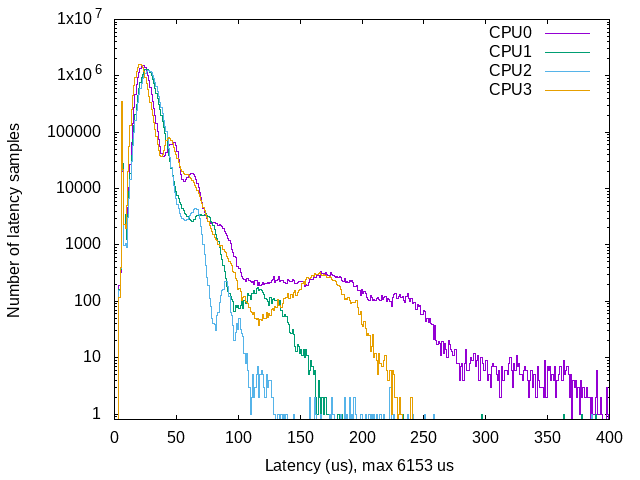

CASE 4: (medium stresstest) Regular Build

-

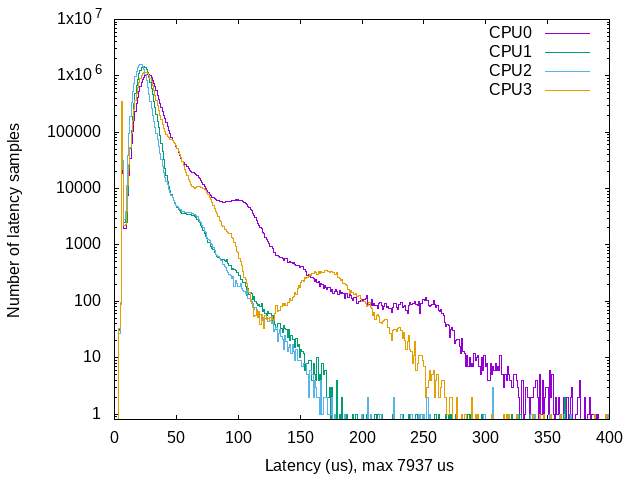

CASE 5: (hard stresstest) Regular Build

| Maximum latency | Case 1 | Case 2 | Case 3 | Case 4 | Case 5 |
|---|---|---|---|---|---|
| RT | 71us | 97us | 172us | 157us | 176us |
| Regular | 5959us | 6167us | 6195us | 6153us | 7937us |
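
To put the table into perspective, the short sketch below computes how many times larger the maximum latency is without the RT patch. It takes the second row of the table to be the regular build, as the conclusions imply; the names `MAX_LATENCY_US` and `regular_to_rt_ratio` exist only in this example.

```python
# Maximum latencies from the table above, in microseconds (cases 1 to 5).
MAX_LATENCY_US = {
    "rt":      [71, 97, 172, 157, 176],
    "regular": [5959, 6167, 6195, 6153, 7937],  # second table row
}


def regular_to_rt_ratio(table):
    """Return, per case, how many times worse the regular build is."""
    return [reg / rt for reg, rt in zip(table["regular"], table["rt"])]


if __name__ == "__main__":
    for case, ratio in enumerate(regular_to_rt_ratio(MAX_LATENCY_US), start=1):
        print(f"Case {case}: regular build max latency is {ratio:.0f}x the RT build")
```

Note that the ratio shrinks under heavier load: the RT build gets noticeably worse, while the regular build stays in the same millisecond range.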

Conclusions

As expected, the differences between these builds are huge. Even with no load, the regular build does not seem to care about latency at all. Processing delays of around 6 ms are almost visible to the human eye, so there is no point in considering the regular build for projects with RT requirements. On the other hand, the RT Yocto build, despite much better results, may also cause problems. Let’s say our project uses an external device that collects data samples, stores them, and sends an interrupt to our main board to fetch them at a frequency of 8 kHz or higher, that is, every 125 us or less. If the interrupt handling latency exceeds 125 us, the data on the external device will be overwritten by the next sample and lost. The maximum latencies the RT build showed under stressapptest load (157 to 176 us in cases 3 to 5) are already above that limit.
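
The 8 kHz example can be checked against the measured values with a few lines of Python. This is a simplified sketch: it treats the maximum cyclictest latency as if it were the interrupt handling latency of the hypothetical external device, and the names used are only illustrative.

```python
SAMPLE_RATE_HZ = 8000
# Time between two interrupts from the external device, in microseconds.
period_us = 1_000_000 / SAMPLE_RATE_HZ

# Maximum latencies of the RT build from the results table (case -> us).
RT_MAX_LATENCY_US = {1: 71, 2: 97, 3: 172, 4: 157, 5: 176}


def cases_missing_deadline(max_latency_us, deadline_us):
    """Return the cases whose worst-case latency exceeds the deadline."""
    return [case for case, latency in sorted(max_latency_us.items())
            if latency > deadline_us]


if __name__ == "__main__":
    late = cases_missing_deadline(RT_MAX_LATENCY_US, period_us)
    print(f"deadline: {period_us:.0f} us, cases over the deadline: {late}")
```

Only the worst case matters here: one wake-up that comes later than the sampling period means one lost sample.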

Summary

RT systems are a blessing for some projects, but we shouldn’t take for granted that they will solve our problems. We must verify that the exact system we use meets our needs. I hope that after this post you will be aware of latencies and will keep in mind to be a little persistent about testing everything you can.
