The importance of test automation

It is widely understood that software, or in our case firmware, must be thoroughly tested before it is released to the public. Without testing, we risk shipping security vulnerabilities, bugs that ruin the user experience, and suboptimal performance. While it may seem obvious what should be done, a closer look shows that there is more than one approach to testing, and the choice strongly affects the results. The question is how to test so that we get the most valuable output in the least amount of time, rather than a clumsy and dangerous release. In this blog post we narrow the distinction down to its core: manual versus automated testing. The former is the simpler method and does not add the cost of writing code, so it can be performed immediately. The latter is based on long-term thinking about the problem: scripts perform the tests without human participation. And let's be clear: the investment in writing those scripts comes with many further benefits.

3mdeb validation approach

How are manual tests different from automated tests? In manual testing, a person plays the role of a standard user and no code is written. As a result, the whole process takes much longer and, although it does not require a large initial investment, it yields a much lower ROI because of the human-resource costs. In some cases manual testing is worth considering, for example for usability testing or for tests without a predefined scenario. For reproducible tasks such as functional tests, however, only automation brings the expected results.

Our validation infrastructure at 3mdeb consists of ~150 tests. Performing all of them manually, step by step, would take days, especially since the process is repetitive. In short, manual testing in this case costs more and slows down development, because a long delay occurs between a code release and feedback for the programmers. If we still tried to test manually, we would know less about the quality of our code and delivery would be delayed.

The whole process can be performed in a much more convenient way. Tests can be run by a developer who needs only brief information about the testing environment. Clear documentation and ready-to-use physical platforms with a system for automatic availability management make it possible to run both single tests and complete suites covering a set of functionalities that must be checked. As a result, we receive immediate feedback about the changes made and the features added.
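To make the idea of automatic availability management more concrete, here is a minimal Python sketch. It is not our actual tooling: `PlatformPool`, `run_tests`, the board names and the placeholder tests are all hypothetical, and a real system would coordinate across machines rather than inside one process. It only shows the principle that a platform is reserved before a single test or a whole suite runs on it, and is always released afterwards.

```python
import threading
from contextlib import contextmanager


class PlatformPool:
    """Tracks which physical platforms are free (hypothetical helper)."""

    def __init__(self, platforms):
        self._free = set(platforms)
        self._lock = threading.Lock()

    @contextmanager
    def reserve(self, name):
        # Claim the platform atomically, or fail fast if it is busy.
        with self._lock:
            if name not in self._free:
                raise RuntimeError(f"platform {name!r} is busy or unknown")
            self._free.remove(name)
        try:
            yield name
        finally:
            # Always release the platform, even when a test raises.
            with self._lock:
                self._free.add(name)

    def is_free(self, name):
        with self._lock:
            return name in self._free


def run_tests(pool, platform, tests):
    """Run one test or a whole suite on a reserved platform."""
    results = {}
    with pool.reserve(platform) as device:
        for test in tests:
            try:
                test(device)
                results[test.__name__] = "PASS"
            except AssertionError:
                results[test.__name__] = "FAIL"
    return results


# Placeholder tests; real ones would talk to the hardware.
def check_boots(device):
    assert device


def check_always_fails(device):
    assert False, "illustrative failure"


pool = PlatformPool(["board-a", "board-b"])
single = run_tests(pool, "board-a", [check_boots])
suite = run_tests(pool, "board-a", [check_boots, check_always_fails])
```

The same `run_tests` call handles a single test and a complete suite, and the context manager guarantees that a failing test does not leave the platform locked for the next developer.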

Remote Testing Environment

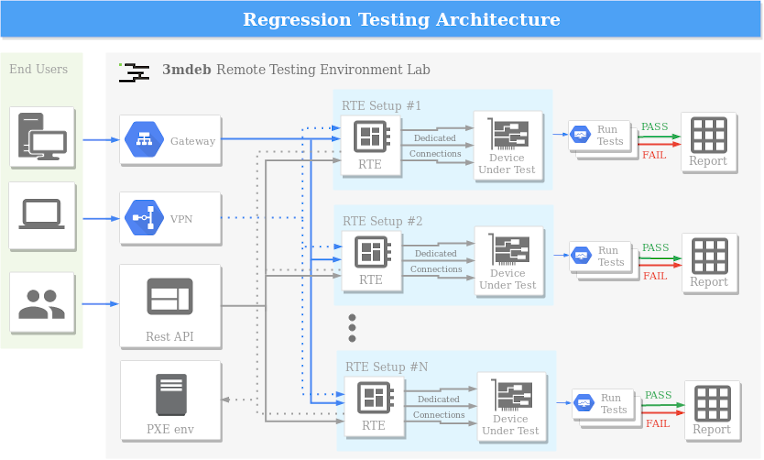

Because we run our tests on hardware, we had to find a way to automate management and operations on physical platforms. To meet that goal we created the Remote Testing Environment, an Orange Pi Zero hat that lets us remotely control power, simulate button presses such as power-on or reset, read serial output, and flash firmware over a physical cable connection, which can unbrick a device without any manual intervention. The setup has a corresponding configuration file for every platform model, which allows for smooth remote test execution. Sounds interesting? Try the rte tag on our blog. You can also check it out in our shop.
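The sketch below illustrates how a per-platform configuration and the operations listed above could fit together in a test script. It is an assumption-laden illustration, not the RTE software itself: `PlatformConfig`, its fields, and `FakeRte` are hypothetical names, and the fake only records actions in memory instead of driving real hardware.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PlatformConfig:
    """Per-model settings; all field names are illustrative."""
    model: str
    serial_baud: int
    power_press_s: float  # how long the power button is "held"


@dataclass
class FakeRte:
    """In-memory stand-in for an RTE board; it only records actions."""
    config: PlatformConfig
    log: list = field(default_factory=list)
    powered: bool = False

    def power_on(self):
        self.log.append(f"press power {self.config.power_press_s}s")
        self.powered = True

    def reset(self):
        self.log.append("press reset")

    def flash(self, image_path):
        # Assumption: the platform is powered off while it is flashed.
        self.powered = False
        self.log.append(f"flash {image_path}")

    def read_serial(self):
        # A real implementation would read from the serial port.
        return "booted" if self.powered else ""


def flash_and_boot(rte, image_path):
    """Flash an image, power the platform on, return the serial output."""
    rte.flash(image_path)
    rte.power_on()
    return rte.read_serial()


config = PlatformConfig(model="example-board", serial_baud=115200, power_press_s=1.0)
rte = FakeRte(config)
output = flash_and_boot(rte, "firmware.rom")
```

Keeping the model-specific values in a configuration object means the test logic in `flash_and_boot` stays identical across platforms; only the configuration changes.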

An automated testing infrastructure can be paired with a continuous integration and delivery pipeline to enhance overall performance. We successfully use this approach when testing our projects. It all begins when the most recent changes for a release are ready in our core repository and marked as a release candidate. From this point, an automatic process begins: building usable binaries, signing them with keys that prove their authenticity, testing, and delivering the final product to the customer as a ready-to-download file. Because these time-consuming tasks are performed automatically, developers have more time for truly creative work on delivering the highest-quality product.
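The stages described above (build, sign, test, deliver) can be sketched as a simple sequential pipeline that stops at the first failure. This is only an illustration in Python: the stage functions are placeholders, the release tag is made up, and a real setup would rely on a CI system rather than a hand-written runner.

```python
def run_pipeline(stages, artifact):
    """Run the stages in order and stop at the first failure."""
    completed = []
    for name, stage in stages:
        try:
            artifact = stage(artifact)
        except Exception as exc:
            return {"ok": False, "failed": name,
                    "completed": completed, "error": str(exc)}
        completed.append(name)
    return {"ok": True, "failed": None,
            "completed": completed, "artifact": artifact}


# Placeholder stages; each one returns an updated copy of the artifact.
def build(artifact):
    return {**artifact, "binary": f"{artifact['tag']}.rom"}


def sign(artifact):
    return {**artifact, "signed": True}


def run_regression(artifact):
    if not artifact.get("signed"):
        raise RuntimeError("refusing to test an unsigned binary")
    return {**artifact, "tested": True}


def deliver(artifact):
    return {**artifact, "published": True}


STAGES = [
    ("build", build),
    ("sign", sign),
    ("test", run_regression),
    ("deliver", deliver),
]

# "v1.0.0-rc1" is a made-up release candidate tag.
result = run_pipeline(STAGES, {"tag": "v1.0.0-rc1"})
```

Because a failure in any stage halts the run, nothing reaches the delivery step unless it has been built, signed and tested first.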

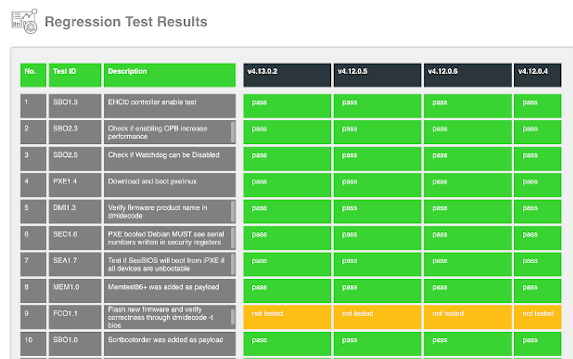

Verifying test results requires an understanding of the tested functionality and interpretation of the output. With automated testing, the output provides both the result and the definition of each test, which gives users, clients, and developers the information they need. When the complete regression testing results for a given release are ready, the next step is to make them accessible and easy to understand.

Why is it so important?

Trust and confidence are critical to the success of a firmware product. There are two main ways to achieve them: transparency (users can see how testing is performed) and hard validation (users can track how deeply the product is tested). Combining the two brings the best result. Publishing the results of hard validation gives the clearest proof of the quality of the delivered product. This is how we work at 3mdeb, and it is what allows us to describe our products as secure and optimized.

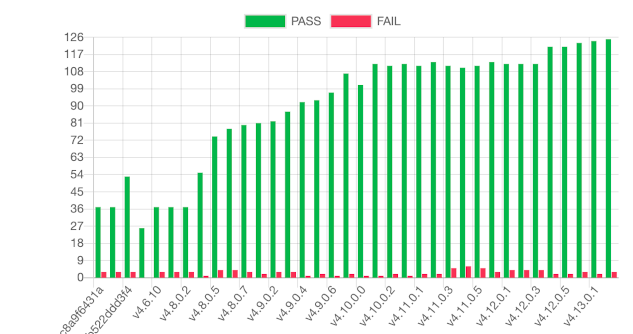

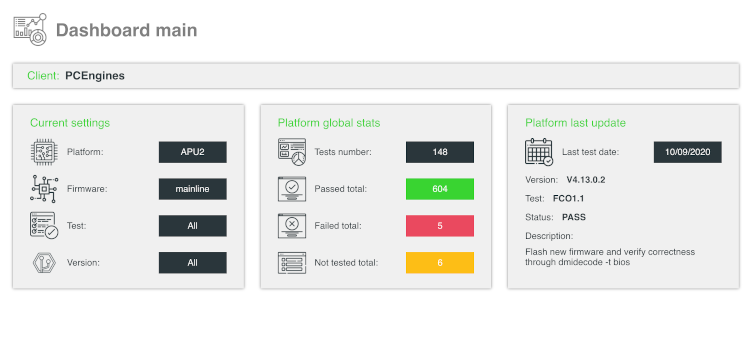

Usually the testing process ends when the results are made publicly available. We could end this post here as well, but there is something more. For analysis, how the results are presented matters. Usually the results are stored as charts in publicly available spreadsheets, which serve an informational role only. This process can be greatly improved and automated, and that is exactly what we did. While waiting for our automated tests to run, we created Dasharo Transparent Validation, a system that automatically presents the results in a clear way. It offers many filters for customizing the view to present exactly the data needed, with support for charts that can be embedded on a website or in a repository README. You will hear more about Dasharo Transparent Validation soon.
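To give a rough feel for what filtering results means in practice, here is a small Python sketch. It does not show how Dasharo Transparent Validation is implemented: `ResultRow`, `filter_results`, `summarize` and the sample rows are invented for illustration only.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ResultRow:
    """One test outcome; the field names are illustrative."""
    platform: str
    release: str
    test_id: str
    status: str  # "PASS" or "FAIL"


def filter_results(rows, **criteria):
    """Keep the rows whose fields equal every given criterion."""
    return [
        row for row in rows
        if all(getattr(row, key) == value for key, value in criteria.items())
    ]


def summarize(rows):
    """Count statuses and compute the pass rate for a set of rows."""
    counts = Counter(row.status for row in rows)
    total = sum(counts.values())
    rate = counts["PASS"] / total if total else 0.0
    return {"total": total, "passed": counts["PASS"],
            "failed": counts["FAIL"], "pass_rate": rate}


# Made-up sample data, not real validation results.
ROWS = [
    ResultRow("board-a", "v1", "boot", "PASS"),
    ResultRow("board-a", "v1", "usb", "FAIL"),
    ResultRow("board-b", "v1", "boot", "PASS"),
    ResultRow("board-a", "v2", "boot", "PASS"),
]

summary = summarize(filter_results(ROWS, platform="board-a", release="v1"))
```

A summary like this is the kind of data a chart or a README badge could be generated from, instead of being copied into a spreadsheet by hand.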

Summary

If you think we can help improve the security of your firmware, or you are looking for someone who can boost your product by leveraging advanced features of your hardware platform, feel free to book a call with us or drop us an email at contact@3mdeb.com. And if you want to stay up to date on all things firmware security and optimization, be sure to sign up for our newsletter.